TL;DR:

- Manual interview feedback is often inconsistent and biased due to memory decay and personal judgment.

- Automation provides real-time, standardized scoring that improves reliability and predictive accuracy.

- Implementing responsible AI involves clear rubrics, bias audits, human oversight, and a feedback loop to improve hiring quality.

Manual interview feedback feels thorough until you realize two interviewers scored the same candidate 30 points apart. That gap isn’t a fluke. It’s a structural problem baked into every process that relies on memory, personal judgment, and rushed notes. AI outperforms humans in consistency and predictive validity, but only when designed with the right safeguards. This article walks recruiters and hiring managers through why manual feedback fails, how automation fixes it, what risks to watch for, and how to build a system that actually improves hiring decisions.

Table of Contents

- The limitations of manual interview feedback

- How automation improves interview feedback

- Addressing risks: bias and edge cases in automated feedback

- Implementing automated feedback: best practices for recruiters

- What most articles miss about interview feedback automation

- Take the next step with automated interview feedback

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Manual feedback limitations | Subjectivity and inconsistency in manual feedback can slow hiring and reduce reliability. |
| AI boosts consistency | Automated feedback delivers more standardized scores and actionable insights, improving hiring outcomes. |
| Risk mitigation essential | Regular validation, bias audits, and human oversight are crucial to avoid pitfalls of automation. |
| Practical adoption steps | Recruiters should set clear rubrics, incorporate human checks, and monitor for edge cases. |
| Strategic human-AI partnership | Blending AI strengths with human judgment leads to the best interview feedback results. |

The limitations of manual interview feedback

Most hiring teams trust their interviewers. That trust is reasonable, but it doesn’t account for the invisible forces that distort every handwritten scorecard. Manual feedback is shaped by memory decay, personal affinity, and the order in which candidates were seen. The interviewer who met six people in one afternoon is not scoring the last candidate with the same mental energy as the first.

A well-documented problem is central tendency bias, where evaluators cluster scores around the middle to avoid conflict or because they lack confidence in extreme ratings. The scores you collect may therefore tell you less than you think: a candidate who genuinely excels or genuinely struggles gets flattened into the same mid-range score as everyone else.

Beyond bias, there’s the documentation problem. Interviewers fill out feedback forms after back-to-back meetings, sometimes hours later. Details blur. Specific answers get replaced with general impressions. The result is feedback that describes how a candidate felt rather than what they actually said.

Here’s what manual feedback consistently gets wrong:

- Timing: Notes written hours after an interview reflect memory, not performance

- Consistency: Different interviewers use the same rubric words to mean different things

- Aggregation: Combining scores across panels requires manual effort and often loses context

- Actionability: Vague comments like “strong communicator” don’t help candidates improve or help managers decide
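To make the consistency problem concrete, here is a minimal Python sketch that flags candidates whose panel scores diverge widely. The 0-100 scale, the sample data, the function names, and the 20-point cutoff are illustrative assumptions, not values taken from any particular tool.

```python
# Hypothetical panel scores on a 0-100 scale: candidate -> {interviewer: score}
panel_scores = {
    "candidate_a": {"interviewer_1": 82, "interviewer_2": 52, "interviewer_3": 70},
    "candidate_b": {"interviewer_1": 64, "interviewer_2": 68, "interviewer_3": 61},
}


def score_spread(scores):
    """Return the gap between the highest and lowest score for one candidate."""
    values = list(scores.values())
    return max(values) - min(values)


def flag_disagreements(panel, max_spread=20):
    """List candidates whose panel scores differ by more than max_spread points."""
    return [name for name, scores in panel.items() if score_spread(scores) > max_spread]


# candidate_a has a 30-point gap between interviewers, so it is the one flagged.
flagged = flag_disagreements(panel_scores)
```

A check like this does not say which interviewer is right. It only shows where the panel needs a calibration conversation before a decision is made.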

As one assessment researcher put it:

“The problem isn’t that humans are bad evaluators. It’s that the conditions we evaluate in are almost perfectly designed to introduce error.”

For teams hiring at scale, these errors compound. A startup interviewing ten people a month can absorb some inconsistency. A company running 200 interviews a month cannot. That’s where exploring AI tools for interview feedback starts to make serious operational sense.

The deeper issue is that manual feedback rarely feeds back into the process. Scores get filed, hiring decisions get made, and no one checks whether the interviewers who rated candidates highest actually predicted job success. Without that loop, you can’t improve. Automation changes that.

How automation improves interview feedback

Automated feedback systems don’t just speed things up. They change the quality of information you collect. When scoring happens in real time against a fixed rubric, you eliminate the memory problem entirely. The system captures what was said, not what the interviewer remembers.

Empirical benchmarks show AI outperforming human raters on both consistency and predictive validity: AI scores vary less from one session to the next and track actual job performance more closely. That’s a significant finding for any team that takes hiring quality seriously, provided the system has been validated against your own roles and rubrics.

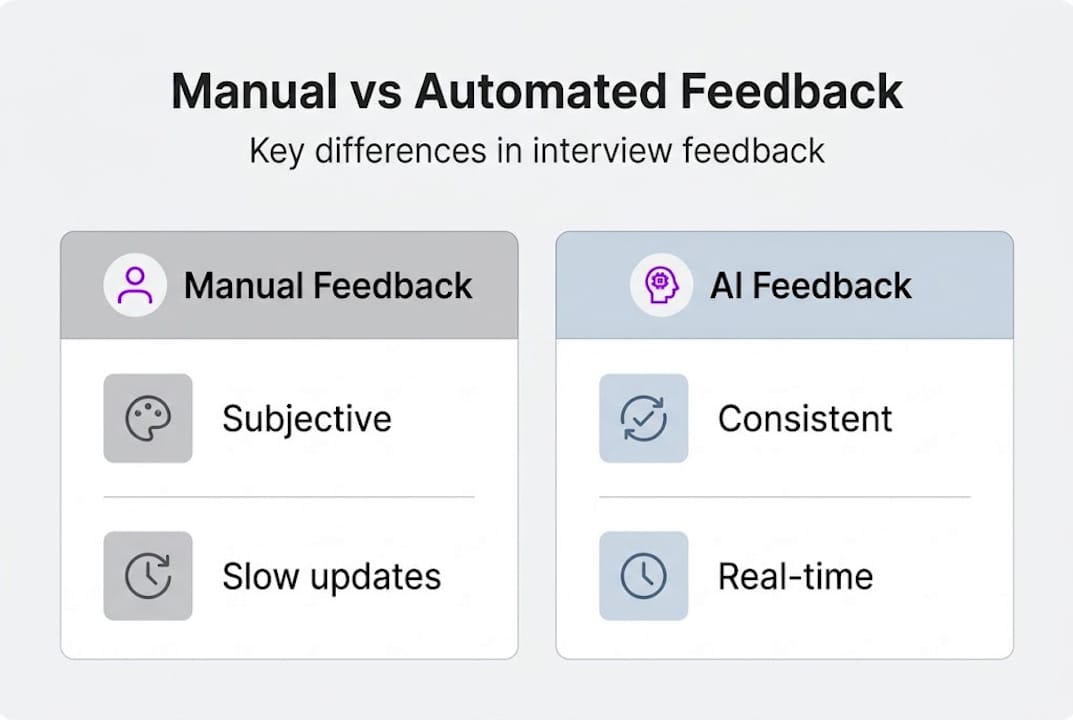

Here’s a direct comparison of manual versus automated feedback across key dimensions:

| Dimension | Manual feedback | Automated feedback |
|---|---|---|
| Timing | Hours or days after interview | Real time or near real time |
| Consistency | Varies by interviewer | Standardized across all sessions |
| Bias detection | Difficult to audit | Auditable with scoring logs |
| Aggregation | Manual, error-prone | Automatic, queryable |
| Actionability | Often vague | Tied to specific rubric criteria |

The practical steps for implementing automated scoring follow a clear sequence:

- Define your rubric first. Automation amplifies whatever criteria you give it. Vague criteria produce vague scores.

- Calibrate with sample interviews. Run the system against recorded or simulated sessions before going live.

- Set scoring thresholds. Decide in advance what score ranges trigger human review versus automatic progression.

- Integrate with your ATS. Scores should flow directly into your applicant tracking system to avoid manual data entry.

- Review outliers weekly. Flag scores that fall outside expected ranges and investigate the cause.
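The threshold and outlier steps above can be sketched in a few lines of Python. Everything here is an assumption for illustration: the 0-100 scale, the cutoffs of 75 and 50, the label names, and the simple z-score rule for outliers. Your own thresholds should come out of calibration, not from this example.

```python
from statistics import mean, stdev


def route_candidate(score, advance_at=75.0, review_at=50.0):
    """Map a rubric score (0-100) to a next step using pre-agreed thresholds."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= advance_at:
        return "advance"
    if score >= review_at:
        return "human_review"
    # Low scores are held for a recruiter decision rather than rejected automatically.
    return "hold"


def find_outliers(scores, z_cutoff=2.0):
    """Return scores more than z_cutoff sample standard deviations from the batch mean."""
    if len(scores) < 3:
        return []
    mu, sigma = mean(scores), stdev(scores)
    if sigma == 0:
        return []
    return [s for s in scores if abs(s - mu) / sigma > z_cutoff]


# Hypothetical scores from one week of phone screens.
weekly_scores = [70, 72, 68, 71, 69, 73, 70, 20]
next_steps = [route_candidate(s) for s in weekly_scores]
outliers = find_outliers(weekly_scores)
```

Fixing the cutoffs as function defaults, rather than deciding case by case, is what makes the routing auditable later.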

The impact of real-time AI scoring goes beyond speed. When feedback is generated during the session, interviewers can probe weaker areas immediately rather than discovering gaps after the candidate has left the building.

Pro Tip: Don’t automate everything at once. Start with one interview stage, such as a structured phone screen, and validate accuracy before expanding to technical or behavioral rounds. Teams that use AI for interview feedback incrementally report fewer calibration issues and faster adoption.

Addressing risks: bias and edge cases in automated feedback

Automation is not a bias-free zone. It’s a bias-transfer zone. Whatever assumptions are embedded in your training data or rubric design will be reproduced at scale, faster and more consistently than any human could manage. That’s a risk worth taking seriously.

AI may struggle with nuanced answers and with responses from non-native speakers and neurodivergent candidates, and it risks encoding bias if it is not validated. A system trained primarily on responses from one demographic group will score atypical communication styles lower, not because those candidates are less qualified, but because the model doesn’t recognize their patterns as competent.

Here’s a breakdown of the most common risk categories:

| Risk type | Description | Mitigation |
|---|---|---|
| Training data bias | Model reflects historical hiring patterns | Audit training data for demographic skew |
| Rubric ambiguity | Vague criteria score inconsistently | Define behavioral anchors for each level |
| Language barriers | Non-native speakers penalized for fluency | Separate content scoring from fluency scoring |
| Neurodivergent candidates | Atypical pacing or structure flagged as weak | Include diverse response patterns in calibration |
| Central tendency | Scores cluster in the middle | Check score distribution monthly |
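The monthly score distribution check in the last row can be as simple as measuring how many scores land in the middle of the scale. The sketch below assumes a 0-100 scale, a middle band of 40 to 60, and a 70% alert level. All three are hypothetical settings you would tune to your own rubric.

```python
def middle_band_share(scores, low=40, high=60):
    """Return the fraction of scores that fall inside the middle band [low, high]."""
    if not scores:
        raise ValueError("scores must not be empty")
    in_band = sum(1 for s in scores if low <= s <= high)
    return in_band / len(scores)


def shows_central_tendency(scores, max_share=0.7):
    """Return True when more than max_share of scores sit in the middle band."""
    return middle_band_share(scores) > max_share


# Hypothetical monthly batches: one clustered around 50, one spread across the scale.
clustered = [48, 52, 50, 55, 45, 51, 49, 53, 47, 90]
spread_out = [20, 35, 50, 65, 80, 95, 45, 70, 30, 85]

clustered_alert = shows_central_tendency(clustered)
spread_alert = shows_central_tendency(spread_out)
```

An alert here is a prompt to look at the rubric and the model, not proof of a fault. A genuinely average candidate pool would produce the same pattern.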

Key mitigation strategies include:

- Run inter-rater reliability checks by comparing AI scores to human scores on the same sessions

- Conduct quarterly bias audits across gender, ethnicity, and disability status

- Build an appeals process so candidates can request human review

- Flag low-confidence scores automatically for human follow-up
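One common starting point for a bias audit is to compare pass rates across groups. The sketch below uses the four-fifths heuristic from US adverse impact analysis, which is an assumption brought in for illustration and not something this article’s sources prescribe. The group labels, pass mark, and sample data are hypothetical.

```python
from collections import defaultdict


def pass_rates(records, pass_mark=70):
    """Compute the share of candidates at or above pass_mark for each group."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= pass_mark:
            passed[group] += 1
    return {group: passed[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's pass rate by the highest group's pass rate."""
    top = max(rates.values())
    if top == 0:
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def groups_to_review(records, pass_mark=70, min_ratio=0.8):
    """Return groups whose pass rate is below min_ratio of the best group's rate."""
    ratios = impact_ratios(pass_rates(records, pass_mark))
    return sorted(group for group, ratio in ratios.items() if ratio < min_ratio)


# Hypothetical audit sample: (group label, AI score).
audit_sample = [
    ("group_x", 80), ("group_x", 75), ("group_x", 72), ("group_x", 60),
    ("group_y", 78), ("group_y", 65), ("group_y", 55), ("group_y", 50),
]
rates = pass_rates(audit_sample)
review = groups_to_review(audit_sample)
```

With samples this small the ratio is noisy, so treat a flag as a reason to investigate with more data and human review rather than as a verdict.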

Validate AI systems psychometrically with rubrics, inter-rater checks, and bias audits before any system goes live. This isn’t optional compliance work. It’s the difference between a system that improves hiring and one that scales your existing blind spots.

Pro Tip: Review soft skills interview tips alongside your rubric design. Soft skill criteria are the most likely to encode cultural assumptions, and improving remote interview performance often starts with clearer behavioral definitions rather than better technology.

Human oversight isn’t a fallback for when automation fails. It’s a permanent feature of a well-designed system.

Implementing automated feedback: best practices for recruiters

Knowing automation works is one thing. Building a system your team will actually use is another. The gap between pilot and production is where most implementations stall. Here’s how to close it.

Start with these five foundational steps:

- Write behavioral rubrics before selecting a tool. The rubric drives everything. Define what a “strong” answer looks like at each competency level with concrete examples.

- Run a parallel scoring period. For the first four to six weeks, have both humans and the AI score the same interviews. Compare results and resolve gaps.

- Train interviewers on the system, not just the output. Interviewers who understand how scores are generated are more likely to trust and act on them.

- Schedule bias audits at fixed intervals. Quarterly is the minimum. Monthly is better for high-volume hiring.

- Create a feedback loop from hire to score. Track whether high-scoring candidates actually perform well on the job. This is the only way to know if your system is working.
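For the parallel scoring period, a rough way to compare human and AI scores is to look at how closely they move together and which sessions disagree most. This sketch assumes both scores use the same 0-100 scale. The session IDs, the data, and the 15-point tolerance are made up for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least two scores")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary to compute a correlation")
    return cov / sqrt(var_x * var_y)


def sessions_to_resolve(sessions, tolerance=15):
    """Return IDs of sessions where human and AI scores differ by more than tolerance."""
    return [sid for sid, human, ai in sessions if abs(human - ai) > tolerance]


# Hypothetical parallel-scoring data: (session ID, human score, AI score).
sessions = [("s1", 80, 78), ("s2", 55, 60), ("s3", 90, 68), ("s4", 40, 45), ("s5", 70, 72)]
human_scores = [human for _, human, _ in sessions]
ai_scores = [ai for _, _, ai in sessions]

agreement = pearson(human_scores, ai_scores)
to_resolve = sessions_to_resolve(sessions)
```

High correlation with a few large gaps, as in this sample, usually points to specific sessions worth reviewing together rather than a system-wide calibration problem.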

Additional implementation considerations:

- Assign a system owner who monitors score distributions and flags anomalies

- Document your validation methodology so you can demonstrate fairness to candidates or regulators

- Set clear escalation paths for edge cases that fall outside normal scoring ranges

- Review candidate experience data alongside scoring data. A technically accurate system that candidates find impersonal will hurt your employer brand

Standard to know: Psychometric validation with rubrics, inter-rater reliability, and bias audits is the recommended standard before any AI interview system goes live.

Exploring top AI models for interviews can help you match the right model capability to your specific rubric complexity. And reading up on AI-powered interview guidance gives you a clearer picture of what to expect from real-world implementations.

What most articles miss about interview feedback automation

Most coverage of automated feedback focuses on efficiency. Faster scores, less admin work, quicker decisions. That framing misses the bigger shift entirely.

The real value of automation is strategic intelligence, not speed. When AI surfaces patterns across hundreds of interviews, you can see things that are invisible at the individual level. Which rubric criteria actually predict 90-day retention? Which interview stages are redundant? Which interviewers consistently give outlier scores to candidates who later become top performers?

Those questions can’t be answered by a human reviewing twenty scorecards. They require aggregated, structured data collected consistently over time. Automation makes that possible.
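As a first pass at the retention question, you could compare average criterion scores for hires who stayed against those who left. The sketch below is a deliberately simple illustration. The record layout, the criterion names, and the data are hypothetical, and a real analysis would need far more hires and a proper statistical test.

```python
from statistics import mean

# Hypothetical hire records: rubric scores at interview plus a 90-day retention flag.
hires = [
    {"scores": {"problem_solving": 85, "communication": 70}, "retained_90d": True},
    {"scores": {"problem_solving": 80, "communication": 60}, "retained_90d": True},
    {"scores": {"problem_solving": 55, "communication": 68}, "retained_90d": False},
    {"scores": {"problem_solving": 60, "communication": 62}, "retained_90d": False},
]


def retention_gap_by_criterion(records):
    """For each criterion, mean score of retained hires minus mean score of hires who left."""
    gaps = {}
    for criterion in records[0]["scores"]:
        stayed = [r["scores"][criterion] for r in records if r["retained_90d"]]
        left = [r["scores"][criterion] for r in records if not r["retained_90d"]]
        if not stayed or not left:
            raise ValueError("need at least one retained and one departed hire")
        gaps[criterion] = mean(stayed) - mean(left)
    return gaps


# In this sample, problem_solving separates the two groups and communication does not.
gaps = retention_gap_by_criterion(hires)
```

A criterion with a gap near zero is a candidate for rewording or removal, but only after the pattern holds up across a much larger set of hires.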

But here’s the uncomfortable part: blind automation breeds risk. Teams that treat AI scores as final answers rather than structured inputs are offloading judgment to a system that cannot understand context. The perspective worth adopting is one where automation handles pattern recognition and humans handle interpretation.

This also redefines what good recruiters do. Less time scoring. More time asking: what does this data mean, and what should we change?

Take the next step with automated interview feedback

You’ve seen why manual feedback fails at scale, how automation addresses those gaps, and what it takes to implement it responsibly. The next step is finding tools that match how your team actually works.

MeetAssist brings real-time AI assistance directly into your interview workflow, supporting platforms like Google Meet and Microsoft Teams with live transcription, scoring support, and structured feedback generation. You can compare AI interview assistants to find the right fit for your process, or go straight to the MeetAssist setup guide to see how quickly you can get a structured feedback system running. Better hiring decisions start with better data, and that starts with the right tools.

Frequently asked questions

Does automated interview feedback reduce bias?

Automated feedback can minimize certain biases by applying consistent criteria, but AI must be validated for bias across minority groups and audited regularly to ensure fairness over time.

How does AI scoring compare with human feedback for interviews?

AI outperforms humans in consistency and predictive validity in aggregate, but it lacks the contextual judgment that human reviewers bring to complex or atypical responses.

What are best practices for automating interview feedback?

Use clear behavioral rubrics, incorporate human oversight at key decision points, and follow psychometric validation with bias audits before going live with any automated system.

Can automated feedback handle edge cases like neurodivergence or language barriers?

AI systems often struggle with nuance in these situations, so regular audits and a clear human review process for flagged scores are essential safeguards.
