TL;DR:

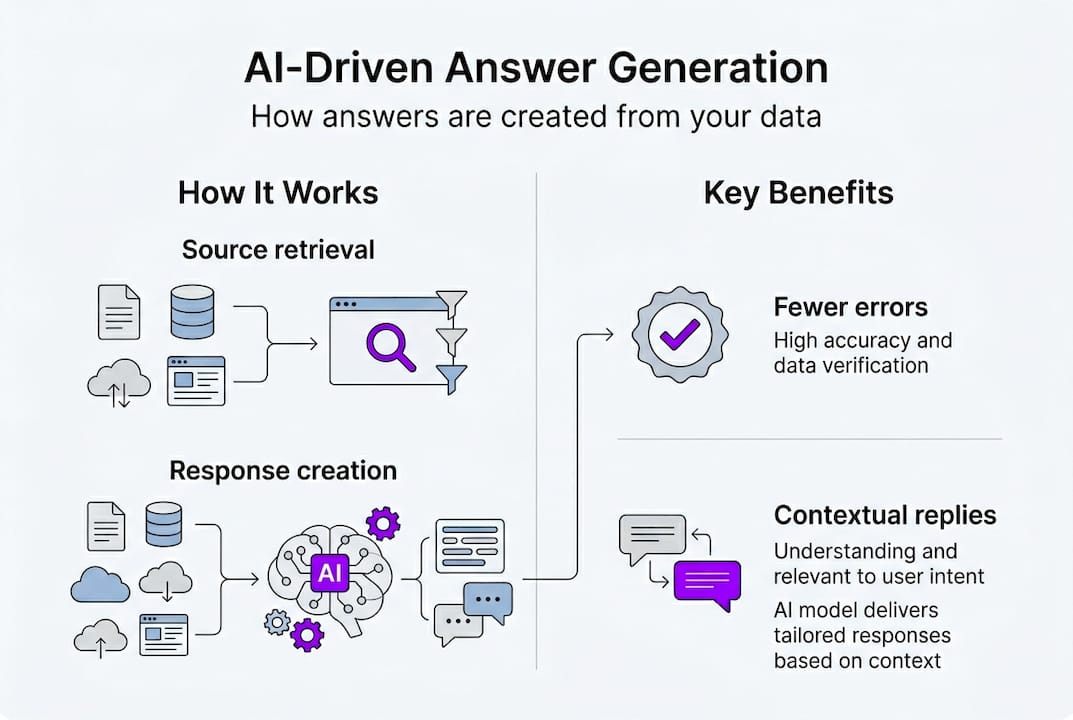

- AI answer generators with Retrieval-Augmented Generation provide personalized, fact-based responses grounded in your data.

- They excel in speed and recall but may produce hallucinations or factual errors in complex scenarios.

- Effective use involves specific prompts, verifying claims, and applying critical thinking beyond AI outputs.

Many people assume AI tools work like a smarter Google, scanning the web and returning links. That mental model is outdated. Today’s AI-driven answer generation systems read your resume, understand the context of a technical question, and produce a tailored response in seconds. For job seekers preparing for coding challenges, behavioral rounds, or system design interviews, this shift matters enormously. This guide breaks down exactly how these systems work, where they shine, where they stumble, and how you can use them to your advantage without getting burned by their limitations.

Table of Contents

- Understanding AI-driven answer generation

- How does AI-driven answer generation work?

- Benchmarks, strengths, and limitations

- Practical tips for using AI-driven answer generation in interviews

- A fresh perspective: Why critical thinking matters more than ever

- Supercharge your interview prep with MeetAssist

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Grounded, contextual answers | AI-driven systems use real sources to craft responses, making answers more reliable for job interviews. |
| Retrieval-Augmented Generation advantage | RAG combines LLMs and search to reduce errors and improve accuracy over traditional chatbots. |
| Critical verification needed | Even advanced AI can make mistakes, so always verify key facts during interview preparation. |
| Maximize AI with smart prompts | Request citations and check context to ensure the AI-generated answer is trustworthy and relevant. |

Understanding AI-driven answer generation

Let’s get clear on what makes this technology so different from simple search or typical chatbots.

AI-driven answer generation refers to systems that use large language models (LLMs) to produce direct, contextual answers to natural language questions by retrieving relevant information and synthesizing it. The key phrase is “synthesizing it.” A keyword search returns ten blue links. An AI-driven system reads those sources, understands your question, and writes you a direct answer.

The technology that makes this possible is called Retrieval-Augmented Generation, or RAG. Here is how to think about it: imagine you have a brilliant friend who, before answering your question, quickly reads the most relevant documents in a library and then speaks to you directly. That is RAG in plain English.

Unlike pure generative QA, RAG grounds each answer in specific retrieved sources, which reduces hallucinations; unlike traditional keyword search, it returns a synthesized answer rather than a list of links. This grounding is what makes RAG especially powerful for technical interviews, where accuracy is not optional.

Here is a quick comparison to make the differences concrete:

| Approach | How it works | Output | Best for |
|---|---|---|---|
| Keyword search | Matches terms in documents | List of links | General browsing |
| Plain LLM (e.g., ChatGPT) | Generates from training data | Direct answer, may hallucinate | Brainstorming, drafting |
| RAG system | Retrieves sources, then generates | Grounded, cited answer | Accuracy-critical tasks |

For job seekers, the RAG advantage is real. When you upload your resume and ask for a response to “Tell me about a time you improved system performance,” a RAG-powered tool pulls specific details from your actual experience rather than inventing plausible-sounding but generic content.

Some key benefits of RAG for interview prep include:

- Personalized answers grounded in your actual resume and work history

- Fewer hallucinations, because answers are grounded in real source material that the model can cite

- Contextual accuracy that adapts to the specific question being asked

Learning more about how AI answer suggestions work in practice can help you use these tools with more confidence. The bottom line: RAG is not magic, but it is a meaningful step forward from guessing.

How does AI-driven answer generation work?

Now that we have defined AI-driven answer generation, here is how these systems actually craft answers from your resume or technical questions.

The core mechanics involve five steps: ingestion (splitting content into chunks and embedding them as vectors for semantic search), retrieval, augmentation, generation, and verification. Each step builds on the last, and a weakness in any one of them degrades the final answer.

Here is what each step looks like in practice:

- Ingestion: Your resume, job description, or technical documentation is broken into chunks and converted into numerical representations called embeddings.

- Retrieval: When you ask a question, the system finds the most semantically similar chunks using a vector database, not just keyword matching.

- Augmentation: The retrieved chunks are added to your question as context before the LLM sees it.

- Generation: The LLM writes a response using both your question and the retrieved context.

- Verification: Some systems check whether the answer is actually supported by the retrieved sources before returning it.
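
The five steps above can be sketched in a few dozen lines of Python. This is a minimal sketch under loud assumptions: a bag-of-words count vector stands in for a real embedding model, a plain list stands in for a vector database, the grounding check is a crude word-overlap test, and `llm` is any function you pass in (the demo uses a hypothetical stub, not a real model). All function names and the resume lines are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def ingest(chunks: list[str]) -> list[tuple[str, Counter]]:
    """1. Ingestion: keep each chunk next to its vector (an in-memory 'vector database')."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(store: list[tuple[str, Counter]], question: str, k: int = 1) -> list[str]:
    """Rank stored chunks by similarity to the question and keep the top k."""
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def augment(question: str, context: list[str]) -> str:
    """Place the numbered chunks in front of the question."""
    sources = "\n".join(f"[{i}] {c}" for i, c in enumerate(context, start=1))
    return f"Use only these sources:\n{sources}\n\nQuestion: {question}"


def is_grounded(answer: str, context: list[str]) -> bool:
    """Crude check: every word of the answer must appear in the retrieved sources."""
    return set(embed(answer)) <= set(embed(" ".join(context)))


def answer_question(store, question: str, llm: Callable[[str], str]) -> str:
    context = retrieve(store, question)   # 2. retrieval
    prompt = augment(question, context)   # 3. augmentation
    answer = llm(prompt)                  # 4. generation
    if not is_grounded(answer, context):  # 5. verification
        return "No grounded answer found."
    return answer


if __name__ == "__main__":
    # Hypothetical resume lines.
    store = ingest([
        "Reduced API latency by 40% by adding a Redis cache.",
        "Led a team of four engineers migrating billing to Kubernetes.",
    ])

    def stub_llm(prompt: str) -> str:
        # Hypothetical stand-in for a real model: repeats source [1] verbatim.
        return prompt.splitlines()[1].removeprefix("[1] ")

    print(answer_question(store, "How did you improve API latency?", stub_llm))
```

Because bag-of-words vectors only match shared words, this toy retriever would miss a question phrased as "improved system performance"; bridging that gap between wording and meaning is what learned embedding models are for.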

Chunk size matters more than most people realize. Chunks of roughly 500 to 600 tokens, with some overlap between neighboring chunks, are a common choice, and vector databases power the similarity search that makes retrieval fast and relevant.
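
A sliding-window chunker shows what "with overlap" means in practice. This sketch assumes whitespace-separated words as a stand-in for model tokens (real pipelines count tokens with the embedding model's tokenizer), and the 100-token overlap is a hypothetical default, not a figure from any particular tool.

```python
def chunk_tokens(tokens: list[str], size: int = 500, overlap: int = 100) -> list[list[str]]:
    """Split tokens into windows of `size`; each window repeats the last `overlap` tokens of the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):  # this window already reaches the end
            break
    return chunks


if __name__ == "__main__":
    tokens = ("word " * 1200).split()  # hypothetical 1,200-word document
    chunks = chunk_tokens(tokens, size=500, overlap=100)
    print([len(c) for c in chunks])  # → [500, 500, 400]
```

The overlap is what keeps boundaries from cutting context in half: any passage no longer than the overlap lands intact in at least one chunk.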

| Step | Technology used | Impact on answer quality |
|---|---|---|
| Ingestion | Embedding models | Determines what context is available |
| Retrieval | Vector database | Controls relevance of retrieved chunks |
| Generation | LLM (GPT, Claude, etc.) | Shapes fluency and coherence |
| Verification | Grounding checks | Reduces hallucinated claims |

Understanding how RAG works at this level helps you ask better questions and spot weak answers faster.

For interview prep specifically, resume-based answer generation is one of the most practical applications. Instead of generic STAR method examples, you get responses built from your actual projects and roles. Pair that with real-time AI for interviews and you have a system that adapts as the conversation evolves.

Pro Tip: When using any AI answer tool, include a phrase like “cite the source for each claim” in your prompt. This pushes the system to ground its response and makes it much easier for you to verify accuracy before your interview.
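
To make the citation habit concrete, here is a sketch of a prompt that numbers its sources and asks for a [number] after each claim, plus a checker that flags sentences with no citation or with a citation to a source that does not exist. The prompt wording, the [n] citation format, and the assumption that each citation sits before the sentence's closing period are illustrative choices, not the format of any specific tool; the function names are hypothetical.

```python
import re


def build_cited_prompt(question: str, sources: list[str]) -> str:
    """Number the sources and ask for a [number] citation after every claim."""
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Cite the source for each claim as [number]. "
        "If no source supports a claim, say so instead of guessing."
    )


def uncited_sentences(answer: str, num_sources: int) -> list[str]:
    """Flag sentences that cite nothing or cite a source number that does not exist."""
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        if not sentence:
            continue
        cited = [int(n) for n in re.findall(r"\[(\d+)\]", sentence)]
        if not cited or any(not 1 <= n <= num_sources for n in cited):
            flagged.append(sentence)
    return flagged


if __name__ == "__main__":
    sources = [
        "Reduced API latency by 40% with a Redis cache.",
        "Led a four-person Kubernetes migration.",
    ]
    print(build_cited_prompt("How did you improve performance?", sources))
    draft = "I cut API latency by 40% [1]. I led a migration to Kubernetes [2]. I also rewrote the compiler [3]."
    print(uncited_sentences(draft, len(sources)))
```

Every flagged sentence is a claim to verify or drop before you say it in an interview.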

Benchmarks, strengths, and limitations

Understanding how AI actually performs helps set realistic expectations and reveals strengths as well as risks.

Frontier LLMs are impressive, but the numbers are more sobering than the marketing suggests. Top models score roughly 45% accuracy on challenging QA benchmarks like Humanity’s Last Exam, around 50 to 57% on LongBench v2, and approximately 69% on FACTS grounding benchmarks. For context, humans score around 50 to 54% on some of these same tests. Accuracy in this range does not justify blind trust.

Here is where AI systems genuinely excel versus where they struggle:

| Capability | AI systems | Humans |
|---|---|---|
| Speed | Generate answers in seconds | Slower; need time to think |
| Recall of patterns | Very strong across large datasets | Limited by memory |
| Novel reasoning | Weak on shifted or edge-case problems | Strong with domain experience |
| Nuance and context | Often miss subtle cues | Naturally pick up on tone |

Edge cases expose the real risks: hallucinations (fabricated facts), retrieval failures, context loss, and recitation over reasoning when problems shift mid-conversation. These are not rare bugs. They are predictable failure modes you need to account for.

For technical interviews, the implications are direct. If an AI tool generates a Python solution with a subtle off-by-one error, a confident delivery of that answer can hurt you more than saying “let me think through this.” Check the HLE leaderboard to see how even the best models handle genuinely hard questions.
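
Here is the kind of slip that paragraph warns about. The first function is a hypothetical AI draft with an off-by-one error in its loop bound; a check against two cases you can work out by hand catches it before you repeat it to an interviewer. The function names and test cases are illustrative.

```python
from typing import Callable


def window_sums_ai_draft(nums: list[int], k: int) -> list[int]:
    """Hypothetical AI-generated draft: sums of every length-k window."""
    # Bug: the range stops one window early, so the last window is silently dropped.
    return [sum(nums[i:i + k]) for i in range(len(nums) - k)]


def window_sums(nums: list[int], k: int) -> list[int]:
    """Corrected version: a list of length n has n - k + 1 windows of size k."""
    return [sum(nums[i:i + k]) for i in range(len(nums) - k + 1)]


def passes_hand_checked_cases(fn: Callable[[list[int], int], list[int]]) -> bool:
    # Small cases whose answers are easy to verify by hand.
    return fn([1, 2, 3, 4], 2) == [3, 5, 7] and fn([5], 1) == [5]


print("AI draft passes:", passes_hand_checked_cases(window_sums_ai_draft))  # → False
print("corrected passes:", passes_hand_checked_cases(window_sums))          # → True
```

Boundary cases such as a single element or a window as long as the input are where off-by-one errors usually surface, so they are worth checking first.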

Some common AI limitations to watch for in interview contexts:

- Confident-sounding answers that contain factual errors

- Outdated information from training data cutoffs

- Generic responses that do not reflect your specific experience

- Reasoning that breaks down when the problem has an unusual twist

Exploring AI tools for interviews with these limitations in mind helps you choose wisely. And using customizable interview answers that let you adjust tone and format gives you more control over what the AI produces.

Pro Tip: Before any interview, test your AI tool on three or four questions you already know the correct answers to. This calibrates your trust level and helps you spot the tool’s specific failure patterns.
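
The calibration tip can be scripted. In this sketch, `ask` is a hypothetical function that sends a question to whatever AI tool you are testing, and an answer counts as correct if it contains the expected text (a naive, case-insensitive match that only suits short factual answers). The canned replies stand in for a real tool.

```python
from typing import Callable


def calibrate(ask: Callable[[str], str], known: dict[str, str]) -> tuple[float, list[str]]:
    """Score an AI tool on questions whose answers you already know.

    Returns (accuracy, questions it got wrong). An answer counts as correct
    if it contains the expected text, ignoring case.
    """
    if not known:
        raise ValueError("need at least one known question")
    misses = [q for q, expected in known.items() if expected.lower() not in ask(q).lower()]
    return 1 - len(misses) / len(known), misses


if __name__ == "__main__":
    # Hypothetical stand-in for a real AI tool: canned replies, one of them wrong.
    canned = {
        "Time complexity of binary search on a sorted array?": "It runs in O(log n) time.",
        "Worst-case time complexity of quicksort?": "O(n log n)",
        "Is Python's built-in sort stable?": "Yes, sorted() and list.sort() are stable.",
    }
    known = {
        "Time complexity of binary search on a sorted array?": "O(log n)",
        "Worst-case time complexity of quicksort?": "O(n^2)",
        "Is Python's built-in sort stable?": "yes",
    }
    accuracy, misses = calibrate(lambda q: canned.get(q, ""), known)
    print(f"accuracy: {accuracy:.0%}")
    for q in misses:
        print("check manually:", q)
```

The list of misses matters more than the score: it shows which kinds of questions this particular tool tends to get wrong.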

Practical tips for using AI-driven answer generation in interviews

Now let’s put this knowledge into practice with actionable tips for job seekers and professionals using AI systems in real situations.

The biggest mistake candidates make is treating AI output as a final answer. It is a first draft. Here is how to use it well:

- Write specific prompts. Instead of “How do I answer this coding question,” try “Generate a Python solution for this problem and cite the algorithmic approach used.”

- Request citations in every prompt. Grounded generation tools like Google’s Vertex AI and Semantic Retrieval are built to support this, and prompting for citations forces the system to stay accountable.

- Review for logic, not just language. A fluent answer can still have broken logic. Read it as if you are the interviewer.

- Cross-check any statistic or technical claim. If the AI says a particular algorithm runs in O(n log n), verify it before you say it out loud.

- Practice out loud. Reading an AI answer and delivering it naturally are two very different skills.
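
One quick way to sanity-check an O(n log n) claim is to count the work an algorithm does as n doubles. This sketch counts element comparisons in a textbook merge sort and compares them with n·⌈log₂ n⌉, using `(n - 1).bit_length()` to compute ⌈log₂ n⌉ exactly for n ≥ 1. A measurement like this cannot prove a complexity bound, but a ratio that stays roughly flat is consistent with O(n log n), while a quadratic algorithm's ratio would keep climbing.

```python
import random


def merge_sort(items: list[int], counter: list[int]) -> list[int]:
    """Return a sorted copy of items, adding each element comparison to counter[0]."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid], counter)
    right = merge_sort(items[mid:], counter)
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        counter[0] += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])  # at most one of these two is non-empty
    merged.extend(right[j:])
    return merged


def count_comparisons(n: int, seed: int = 0) -> int:
    """Sort n distinct random integers and return how many comparisons were made."""
    data = random.Random(seed).sample(range(10 * n), n)
    counter = [0]
    merge_sort(data, counter)
    return counter[0]


if __name__ == "__main__":
    for n in (1_000, 2_000, 4_000, 8_000):
        comparisons = count_comparisons(n)
        bound = n * (n - 1).bit_length()  # n * ceil(log2 n), exact for n >= 1
        print(f"n={n:>5}  comparisons={comparisons:>7}  ratio={comparisons / bound:.2f}")
```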

From a production standpoint, low temperature settings, self-consistency sampling, and claim verification are the techniques engineers use to reduce hallucinations in deployed systems. You can apply the same logic: prefer tools that show their sources, and treat high-confidence answers with the same scrutiny as uncertain ones.
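
Two of those techniques can be illustrated with a toy model. Temperature divides the model's scores (logits) before a softmax, so a low temperature concentrates probability on the top answer; self-consistency samples several answers and keeps the majority, treating a weak majority as a warning sign. The candidate answers, logits, and function names below are made up for illustration and do not come from any real model or library.

```python
import math
import random
from collections import Counter
from typing import Callable


def softmax(logits: list[float], temperature: float) -> list[float]:
    """Temperature-scaled softmax: lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    top = max(scaled)
    exps = [math.exp(x - top) for x in scaled]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def self_consistent_answer(sample: Callable[[], str], n: int = 9) -> tuple[str, float]:
    """Draw n answers, then return the most common one and its share of the votes."""
    votes = Counter(sample() for _ in range(n))
    answer, count = votes.most_common(1)[0]
    return answer, count / n


if __name__ == "__main__":
    # Made-up candidate answers and scores, for illustration only.
    candidates = ["O(n log n)", "O(n^2)", "O(n)"]
    logits = [2.0, 1.0, 0.5]
    rng = random.Random(0)

    for t in (1.0, 0.2):
        print(f"T={t}:", [round(p, 3) for p in softmax(logits, t)])

    def sample_answer() -> str:
        return rng.choices(candidates, weights=softmax(logits, 0.7))[0]

    answer, share = self_consistent_answer(sample_answer)
    print(f"majority answer: {answer} ({share:.0%} of samples)")
```

Voting only tells you something when samples can differ: at a temperature near zero every sample is the same and the vote share is trivially 100%.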

Here is a quick checklist before using any AI-generated answer in an interview:

- Does the answer directly address the question asked?

- Are any technical claims verifiable?

- Does it reflect your actual experience, not a generic example?

- Can you explain the reasoning behind it in your own words?

Understanding LLM reasoning challenges helps you anticipate where AI tools are most likely to let you down. For building real confidence, boosting interview confidence with structured AI practice is more effective than passive reading. And using AI smartly for interviews means knowing when to trust it and when to override it.

Pro Tip: Pair AI suggestions with your own domain knowledge. If you know the topic well, use the AI to sharpen your phrasing. If you are less certain, use it to identify what you need to study, not to substitute for that study.

A fresh perspective: Why critical thinking matters more than ever

With these tips in mind, it is worth stepping back and asking a harder question: are AI-savvy candidates actually better prepared, or just better at sounding prepared?

The uncomfortable truth is that AI-driven tools can make a candidate sound polished while masking shallow understanding. Hiring managers at technical companies are increasingly aware of this. Many are shifting toward follow-up questions specifically designed to probe whether a candidate actually understands the answer they just gave. An AI-generated response that you cannot explain one level deeper is a liability, not an asset.

The candidates who stand out are not those who use AI the most. They are those who treat AI output as raw material and then build something better from it. They challenge the answer, find the edge case the AI missed, and bring their own experience to the surface. That is the skill interview automation cannot replace.

Critical thinking is not the opposite of using AI. It is what makes AI useful.

Supercharge your interview prep with MeetAssist

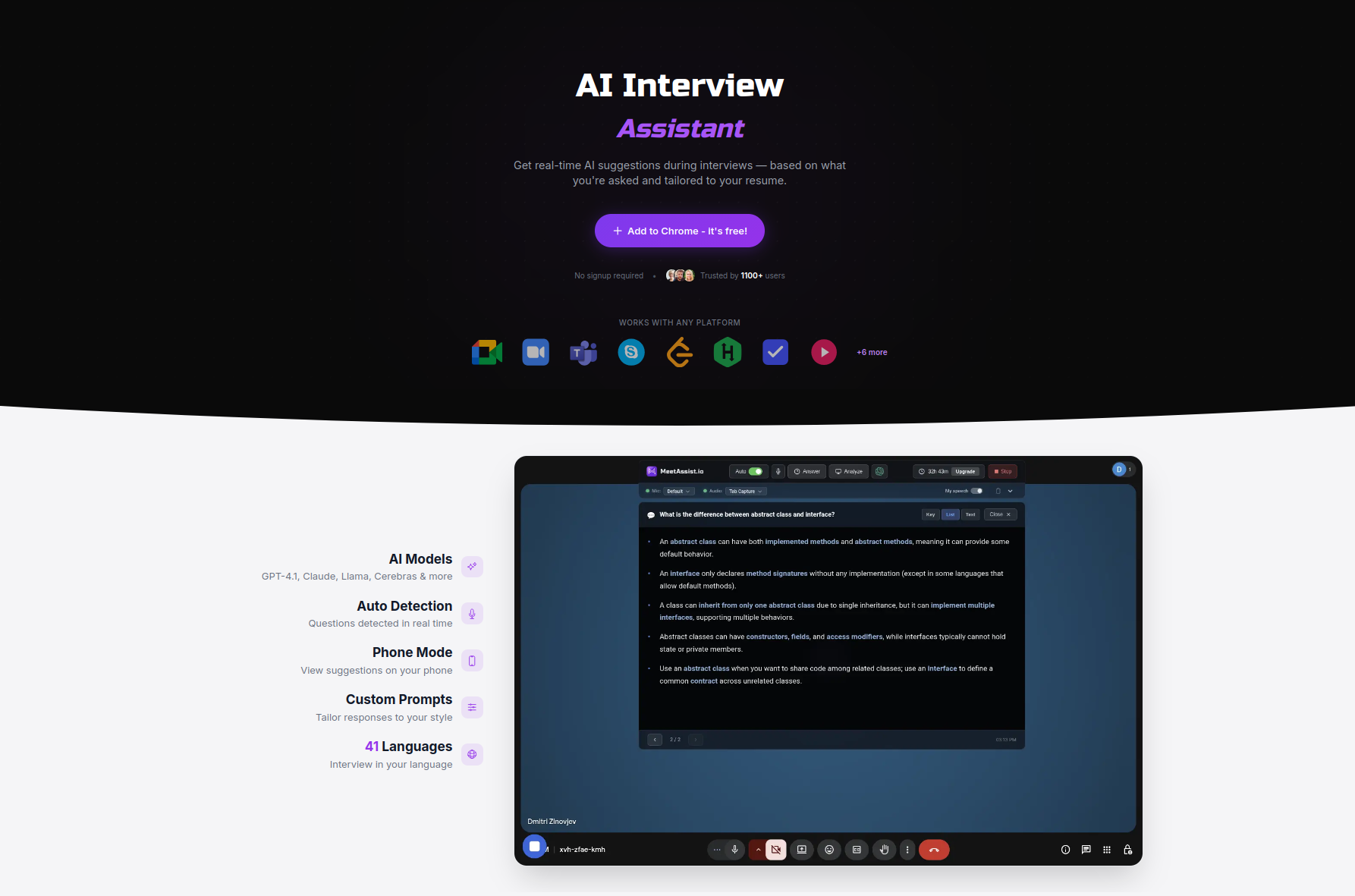

For those eager to level up their interview preparation with these technologies, here is how MeetAssist can help.

MeetAssist applies these exact AI techniques, including real-time retrieval and grounded answer generation, to deliver interview-ready responses as conversations happen. You upload your resume, and MeetAssist personalizes every suggestion to your actual experience. With support for GPT-4.1, Claude, Llama, and Cerebras, you choose the model that fits your needs.

Phone Mode takes it further by moving everything to your phone screen so nothing is visible on your computer during the interview. Explore AI assistant alternatives to see how MeetAssist compares, or check out the MeetAssist guides to start practicing today. Visit MeetAssist to get started with a one-time purchase and no subscription required.

Frequently asked questions

How is AI-driven answer generation different from regular chatbots?

Unlike simple chatbots, AI-driven systems use retrieval and synthesis to ground answers in sources, reducing errors and producing more accurate, contextual responses tailored to your specific question.

Can I trust AI-generated answers for interviews?

AI tools often provide accurate and helpful starting points, but edge cases like hallucinations and retrieval failures mean you should always verify critical information before using it in an interview.

What are common pitfalls when using AI-driven answers?

The most common pitfalls are relying on hallucinated facts from LLMs, misreading vague citations, and failing to verify reasoning when a problem shifts mid-interview.

How can I get the most out of AI-driven answer tools?

Ask specific questions, require citations in your prompts, and use grounded generation tools that let you verify sources, then layer in your own domain knowledge before delivering any answer.
