TL;DR:

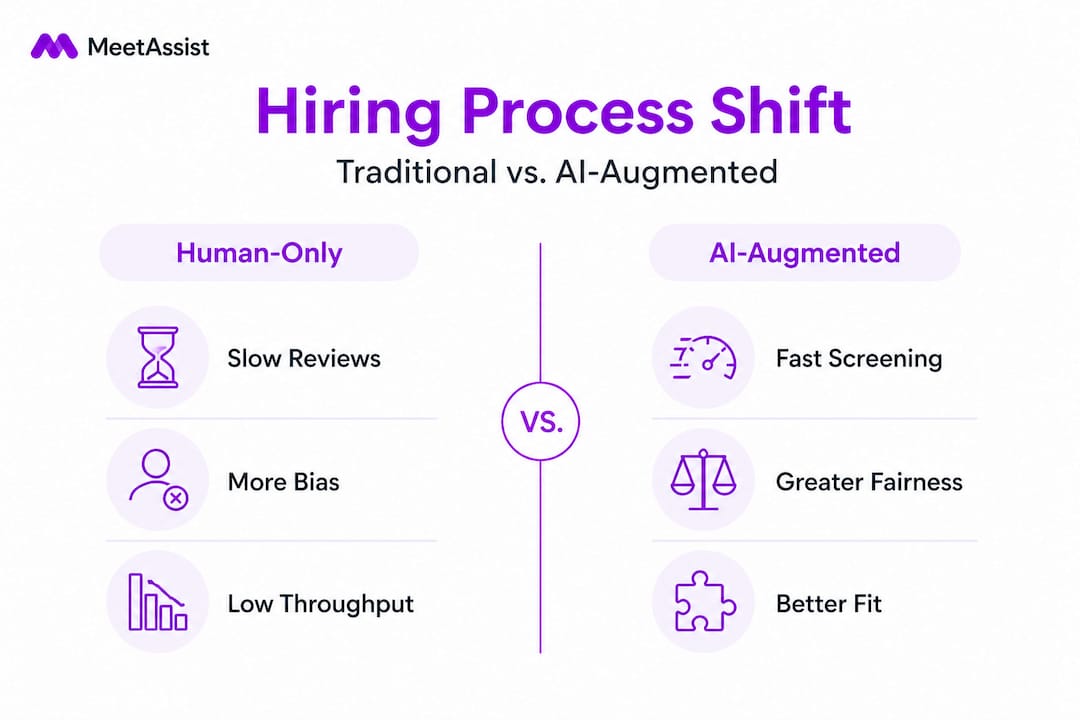

- AI enhances technical hiring by increasing candidate throughput and improving predictive outcomes.

- Structured AI assessments can reduce bias and standardize evaluation, leading to better hires.

- Effective AI adoption requires strategy, transparency, candidate experience considerations, and ongoing monitoring.

Most hiring teams think AI just speeds up resume screening. That assumption is costing them better engineers. A randomized controlled trial found that AI-powered structured video interviews pushed junior developer pass rates from 34% with resume screening alone to 54% at the final human interview stage, while cutting the total number of human interviews by 44% and producing candidates who landed new jobs at nearly twice the rate. That is not automation. That is a fundamental shift in how technical talent gets identified, evaluated, and hired.

Table of Contents

- The evolution of technical hiring with AI

- AI-powered assessment: Efficiency, fairness, and quality-of-hire

- What technical hiring managers should know before adopting AI

- Integrating AI into your technical hiring workflow: Steps and best practices

- Perspective: Why AI in hiring is only as good as your strategy

- Take your technical hiring to the next level with MeetAssist

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI enhances efficiency | AI-driven tools screen more candidates and reduce recruiter workload at lower cost. |
| Improved hiring outcomes | Evidence shows higher-quality matches and better job performance when using AI-powered interviews. |
| Nuanced impact on fairness | AI can mitigate some human biases but requires careful deployment to avoid new risks. |
| Strategic implementation matters | The real gains depend on integrating AI with transparent processes and active human oversight. |
| Continuous improvement required | AI systems need regular review and adjustment to balance efficiency, fairness, and experience. |

The evolution of technical hiring with AI

Traditional technical hiring was a three-stage gauntlet: recruiter screens a resume, a hiring manager runs a phone screen, and a panel conducts a live coding session. Each stage filtered candidates, but the filters were inconsistent. Two recruiters reviewing the same resume could reach opposite conclusions. Coding tests varied by interviewer mood and available time. Bias crept in through name recognition, school prestige, and phrasing choices on a CV.

AI approaches these problems differently. Instead of replicating human judgment at scale, the best tools restructure what gets measured and how consistently it gets measured. This matters especially for technical skill assessments, where the gap between what a resume signals and what a candidate can actually do is notoriously wide.

The throughput gap alone is striking. Research shows that AI screens about 3.28 candidates per hour at roughly $2.29 per candidate, compared to a human recruiter’s 1.07 candidates per hour at $50 each, with precision and recall comparable to expert reviewers. That is roughly three times the throughput at under a twentieth of the cost. It is not a marginal efficiency gain; it is a structural change in what a recruiting operation can accomplish.
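To make those figures concrete, here is a minimal Python sketch that turns the quoted rates and unit costs into ratios and batch-level estimates. The rates and costs come from the figures above; the 500-candidate batch and the function name `screening_estimate` are illustrative assumptions, not part of the cited research.

```python
# Rates and unit costs as quoted in the article.
AI_RATE_PER_HOUR = 3.28
HUMAN_RATE_PER_HOUR = 1.07
AI_COST_PER_CANDIDATE = 2.29
HUMAN_COST_PER_CANDIDATE = 50.00


def screening_estimate(candidates: int, rate_per_hour: float, cost_per_candidate: float) -> dict:
    """Return hours and total cost to screen a batch at a fixed rate and unit cost."""
    return {
        "hours": candidates / rate_per_hour,
        "total_cost": candidates * cost_per_candidate,
    }


# How many times faster and cheaper the AI figures are than the human figures.
throughput_ratio = AI_RATE_PER_HOUR / HUMAN_RATE_PER_HOUR
cost_ratio = HUMAN_COST_PER_CANDIDATE / AI_COST_PER_CANDIDATE

batch = 500  # hypothetical batch size, chosen only for illustration
ai = screening_estimate(batch, AI_RATE_PER_HOUR, AI_COST_PER_CANDIDATE)
human = screening_estimate(batch, HUMAN_RATE_PER_HOUR, HUMAN_COST_PER_CANDIDATE)

print(f"Throughput ratio: {throughput_ratio:.2f}x")
print(f"Cost ratio: {cost_ratio:.1f}x")
print(f"AI: {ai['hours']:.0f} h, ${ai['total_cost']:,.0f}")
print(f"Human: {human['hours']:.0f} h, ${human['total_cost']:,.0f}")
```

The sketch assumes rates and unit costs stay constant as volume grows, which a real operation should verify against its own data.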

Here is where traditional hiring tends to break down:

- Inconsistent evaluation criteria across recruiters and hiring panels

- High cost per screened candidate, making deep-funnel evaluation economically impractical

- Slow turnaround that causes top candidates to accept competing offers

- Resume-based filtering that penalizes nontraditional career paths

- Interviewer fatigue that degrades decision quality late in the day

| Metric | Traditional hiring | AI-assisted hiring |
|---|---|---|
| Candidates screened per hour | 1.07 | 3.28 |
| Cost per screened candidate | $50.00 | $2.29 |
| Consistency of evaluation | Variable | Standardized |
| Bias surface area | High (multiple humans) | Lower (single model) |
| Time to shortlist | Days to weeks | Hours |

“The most underappreciated benefit of AI in recruiting is not speed. It is the ability to apply the same evaluation standard to the 500th candidate as you did to the first.”

AI personalization for hiring takes this further by tailoring assessment difficulty, question sets, and feedback loops to the specific role and level. When paired with AI-driven assessment strategies, organizations can move from generic job descriptions to role-specific predictive filters that actually correlate with on-the-job performance.

AI-powered assessment: Efficiency, fairness, and quality-of-hire

Efficiency is the easy sell. Fairness is where things get complicated. And quality-of-hire is the metric that ultimately determines whether any of this investment was worth making.

Start with the fairness question, because recent research produces a genuinely counter-intuitive finding: AI scoring systems actually rate underrepresented groups higher than human interviewers do, suggesting that algorithmic evaluation removes some of the subconscious bias that human evaluators carry into every session. At the same time, the same research shows that asynchronous AI-led interviews reduce application continuation by more than 50%, with women disproportionately opting out. That is a real tension. You can have a fairer scoring system while simultaneously creating a process that fewer qualified candidates choose to complete.

Here is how a standard AI-driven assessment typically unfolds:

- Candidate applies and receives an asynchronous interview invitation with instructions and timing expectations.

- AI conducts a structured video or text interview, using the same question set for every applicant at that level.

- Responses are analyzed across dimensions like technical accuracy, communication clarity, and problem-solving process.

- Scores are generated with explanatory annotations, not just numeric outputs.

- Human reviewers receive a ranked shortlist with score breakdowns and flag any edge cases for secondary review.

- Final interviews are conducted by human panels with AI-generated context attached to each candidate file.
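The scoring and shortlist steps above can be sketched as a small data structure. This is a hypothetical illustration, not any vendor's schema: the class names, the 0 to 100 scale, the unweighted average, the cutoff of 70, and the 5-point review band are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class DimensionScore:
    name: str       # e.g. "technical_accuracy"
    score: float    # assumed 0-100 scale
    rationale: str  # explanatory annotation shown to human reviewers


@dataclass
class Assessment:
    candidate_id: str
    dimensions: list[DimensionScore] = field(default_factory=list)

    @property
    def overall(self) -> float:
        """Unweighted mean of dimension scores (an assumed aggregation)."""
        if not self.dimensions:
            return 0.0
        return sum(d.score for d in self.dimensions) / len(self.dimensions)


def build_shortlist(assessments, cutoff=70.0, review_band=5.0):
    """Rank by overall score and flag candidates near the cutoff for secondary review."""
    ranked = sorted(assessments, key=lambda a: a.overall, reverse=True)
    return [
        {
            "candidate_id": a.candidate_id,
            "overall": round(a.overall, 1),
            "needs_secondary_review": abs(a.overall - cutoff) <= review_band,
            "breakdown": {d.name: (d.score, d.rationale) for d in a.dimensions},
        }
        for a in ranked
    ]


# Hypothetical candidates and annotations.
first = Assessment("cand-001", [
    DimensionScore("technical_accuracy", 82, "Correct solution, missed one edge case"),
    DimensionScore("communication_clarity", 74, "Clear but terse explanations"),
    DimensionScore("problem_solving", 90, "Stated trade-offs before coding"),
])
second = Assessment("cand-002", [
    DimensionScore("technical_accuracy", 68, "Partial solution"),
    DimensionScore("communication_clarity", 75, "Well-structured answers"),
    DimensionScore("problem_solving", 73, "Needed hints to decompose the task"),
])

shortlist = build_shortlist([second, first])
for entry in shortlist:
    print(entry["candidate_id"], entry["overall"], entry["needs_secondary_review"])
```

Keeping the rationale next to each score is the design choice that matters here: reviewers see why a number was assigned, and borderline cases are routed to a human instead of being decided by the cutoff alone.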

This structure matters because it creates accountability at every stage. When an AI tool scores a candidate, hiring managers can see why, which is a major improvement over the black-box nature of a gut-feeling phone screen.

| Dimension | Human-only process | AI-augmented process |
|---|---|---|
| Evaluation speed | Slow | Fast |
| Scoring consistency | Low | High |
| Bias in scoring | High | Reduced |
| Predictive validity | Moderate | Higher |
| Candidate experience | Familiar | Varies by format |

The quality-of-hire numbers are what should get every technical hiring manager’s attention. The same trial data that showed improved pass rates also tracked five-month employment outcomes. Candidates selected through AI-assisted pipelines found new jobs at a 40% rate compared to 23% for those selected through traditional resume screening. Predictive validity, the degree to which an assessment actually forecasts real-world job success, is the hardest metric to improve. AI is doing it.
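When reporting a comparison like 40% versus 23%, it helps to state both the absolute gap and the relative ratio, since the two can leave very different impressions. A minimal sketch, using only the two rates quoted above (the helper name `lift` is an assumption for illustration):

```python
def lift(baseline: float, treated: float) -> tuple[float, float]:
    """Return (absolute difference in percentage points, treated rate as a multiple of baseline)."""
    return (treated - baseline) * 100, treated / baseline


# Five-month new-job rates quoted in the article: 23% (resume screening) vs 40% (AI-assisted).
points, ratio = lift(baseline=0.23, treated=0.40)
print(f"{points:.0f} percentage points, {ratio:.2f}x the baseline rate")
```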

Understanding the AI interview ethics dimension is equally critical. Candidates increasingly know when they are being evaluated by algorithms, and their trust in the process depends on perceived fairness. The automation impact in interviews goes beyond cost savings. It shapes how your employer brand is perceived in competitive talent markets. You need both efficiency and a candidate experience that does not drive top talent toward companies with warmer pipelines.

For deeper context on how AI business process improvements translate across the hiring function, it is worth studying cross-industry implementations that have had to balance throughput with trust.

What technical hiring managers should know before adopting AI

Adoption without strategy produces expensive disappointment. Most technical hiring managers who struggle with AI hiring tools run into one of three problems: they picked the wrong tool for the wrong stage, they did not account for candidate experience degradation, or they over-indexed on efficiency and stopped measuring quality outcomes.

Before you bring AI into your hiring workflow, consider these practical challenges:

- Tool integration complexity: AI hiring platforms need to connect with your ATS, scheduling systems, and compensation benchmarks. Poor integration creates data silos that undermine the consistency gains you are paying for.

- Regulatory and compliance requirements: Depending on your jurisdiction, automated hiring decisions may trigger legal disclosure requirements. Know your obligations before your first automated rejection.

- Candidate experience expectations: Technical candidates, especially senior engineers, often react poorly to impersonal asynchronous screening. Design your process with the candidate’s perspective in mind.

- Model transparency: Some AI tools give you a score. Others give you a score and an explanation. The second type is dramatically more useful for both defensibility and improvement.

- Bias audit cadence: No AI tool is inherently neutral. Empirical benchmarks show mixed bias results across different tools and implementations. You need a regular audit process, not a one-time check.

Pro Tip: When evaluating AI hiring tools, prioritize vendors who can explain why a candidate scored the way they did, not just that they scored highly. Causal explanations give you actionable feedback for improving both your process and your job descriptions. Predictive black boxes replicate historical patterns without giving you any lever to improve outcomes.

Ensuring your AI tools align with DEI values requires active management. Preparing for AI assessments from the candidate side reveals exactly where processes create unnecessary friction. Running that exercise internally, mapping what your candidates actually experience, surfaces drop-off points before they affect your pipeline. Similarly, reviewing AI-powered online quizzes from the candidate’s perspective gives you a realistic picture of where your technical assessments may be filtering on test-taking skill rather than engineering competence.
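One way to surface drop-off points is to compute the share of candidates lost at each transition in the funnel. The sketch below assumes you can export stage counts from your ATS; the stage names and counts are hypothetical.

```python
def stage_drop_off(funnel: list[tuple[str, int]]) -> list[dict]:
    """For each stage transition, report the share of candidates who did not reach the next stage."""
    report = []
    for (stage, entered), (next_stage, reached) in zip(funnel, funnel[1:]):
        report.append({
            "from": stage,
            "to": next_stage,
            "drop_off_rate": 1 - reached / entered if entered else 0.0,
        })
    return report


# Hypothetical counts for the candidate-controlled part of an AI screening stage.
funnel = [
    ("invited", 400),
    ("started", 180),
    ("completed", 150),
]

report = stage_drop_off(funnel)
worst = max(report, key=lambda row: row["drop_off_rate"])

for row in report:
    print(f"{row['from']} -> {row['to']}: {row['drop_off_rate']:.0%} lost")
print(f"Largest drop-off: {worst['from']} -> {worst['to']}")
```

Restricting the funnel to stages where the candidate decides whether to continue keeps voluntary drop-off separate from rejections, which is the distinction this kind of audit is meant to expose.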

The most important thing to resist is the temptation to treat AI adoption as a one-time configuration. Hiring markets shift. Candidate pools change. An AI tool calibrated on last year’s hiring data may be subtly miscalibrated for this year’s roles. Build review cycles into your adoption plan from day one. A structured AI adoption roadmap can help you plan your rollout with built-in review gates rather than a single launch event.

Integrating AI into your technical hiring workflow: Steps and best practices

A structured implementation approach prevents the most common failure modes. Here is a sequence that reflects what actually works in practice.

- Define your success metrics first. Before selecting a tool, agree on what “better hiring” looks like: time to offer, offer acceptance rate, 90-day retention, or six-month performance reviews. Without a baseline, you cannot measure improvement.

- Audit your current pipeline. Map every stage from job posting to day-one start. Identify where candidates drop out, where decisions take longest, and where your team spends the most time on low-value tasks.

- Select AI tools for your highest-friction stages. Resume screening and initial technical screening are the most common starting points because the volume is highest and the value of consistency is clearest.

- Run a pilot with a defined scope. Apply AI tools to a single role or department for 60 to 90 days. Track both efficiency metrics and quality outcomes in parallel.

- Collect candidate feedback systematically. Send a two-question survey after every AI-mediated stage: was the process clear, and did it feel fair? This data is essential for iteration.

- Iterate based on outcome data. After your pilot, review pass-through rates by demographic, compare early indicators to performance data, and adjust scoring weights or question sets accordingly.
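The pass-through review in the last step can be sketched as follows. The article does not prescribe a specific test; this example swaps in the "four-fifths" heuristic from US EEOC guidance, where a segment whose pass rate is below 80% of the highest segment's rate is flagged for closer review. The segment labels and counts are hypothetical, and a flag is a prompt to investigate, not a legal conclusion.

```python
from collections import Counter


def pass_rates(records):
    """records: iterable of (segment, passed) pairs. Returns the pass rate per segment."""
    totals, passes = Counter(), Counter()
    for segment, passed in records:
        totals[segment] += 1
        passes[segment] += int(passed)
    return {segment: passes[segment] / totals[segment] for segment in totals}


def impact_ratios(rates):
    """Each segment's pass rate divided by the highest segment's pass rate."""
    top = max(rates.values())
    if top == 0:
        return {segment: 0.0 for segment in rates}
    return {segment: rate / top for segment, rate in rates.items()}


# Hypothetical pilot data: 30 of 50 pass in segment_a, 18 of 40 pass in segment_b.
records = (
    [("segment_a", True)] * 30 + [("segment_a", False)] * 20
    + [("segment_b", True)] * 18 + [("segment_b", False)] * 22
)

rates = pass_rates(records)
ratios = impact_ratios(rates)
THRESHOLD = 0.8  # four-fifths heuristic, used here as an assumed review trigger
flagged = sorted(segment for segment, ratio in ratios.items() if ratio < THRESHOLD)

for segment in sorted(rates):
    print(f"{segment}: pass rate {rates[segment]:.0%}, impact ratio {ratios[segment]:.2f}")
print("Flagged for review:", flagged)
```

With small pilots the rates are noisy, so a flag from a single cycle is better read as a reason to collect more data than as a verdict on the tool.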

AI-powered structured video interviews have shown that when implemented correctly, pass rates to final human interviews can improve from 34% to 54% while simultaneously reducing the total number of human interviews by 44%. That is a significant compounding gain: better candidates move forward, and your technical panel spends less time reviewing weaker fits.

Best practices for ongoing monitoring include:

- Monthly pipeline audits reviewing pass rates by role, level, and demographic segment

- Quarterly calibration sessions where hiring managers review AI scores alongside their own assessments to identify systematic divergences

- Annual bias testing using matched candidate profiles to detect drift in scoring behavior

- Continuous feedback loops from new hires about whether the AI process matched the actual job
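For the quarterly calibration sessions listed above, one simple way to spot systematic divergence is to compare AI and human scores for the same candidates, grouped by role. This is a minimal sketch: the role names, scores, shared 0 to 100 scale, and the 5-point flag threshold are all hypothetical.

```python
from statistics import mean


def score_divergence(pairs):
    """pairs: list of (ai_score, human_score) for the same candidates on the same scale."""
    diffs = [ai - human for ai, human in pairs]
    return {
        "mean_signed_diff": mean(diffs),                   # above 0 means the AI scores higher on average
        "mean_abs_diff": mean([abs(d) for d in diffs]),    # typical size of disagreement
    }


# Hypothetical paired scores collected during a calibration session.
paired_scores = {
    "backend": [(80, 78), (72, 75), (65, 66)],
    "frontend": [(85, 70), (78, 66), (90, 79)],
}

FLAG_THRESHOLD = 5.0  # assumed tolerance before a role is discussed in calibration
summary = {role: score_divergence(pairs) for role, pairs in paired_scores.items()}
flagged_roles = sorted(
    role for role, stats in summary.items()
    if abs(stats["mean_signed_diff"]) > FLAG_THRESHOLD
)

for role, stats in summary.items():
    print(f"{role}: signed {stats['mean_signed_diff']:+.1f}, absolute {stats['mean_abs_diff']:.1f}")
print("Roles to review:", flagged_roles)
```

A large signed difference points to a consistent offset between the model and your panel, while a large absolute difference with a small signed one suggests noisy disagreement; the two call for different follow-up.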

Pro Tip: Implement candidate feedback surveys at every AI-mediated stage, not just at the end. Early-stage experience data tells you whether your AI is filtering qualified candidates before your team ever sees them. This is the single most underused quality signal in technical hiring.

Two resources offer practical detail for teams in the implementation phase: AI-powered interview guidance that helps both candidates and evaluators prepare effectively, and a look at how AI for coding challenges is reshaping technical assessment design.

Perspective: Why AI in hiring is only as good as your strategy

Here is the uncomfortable truth most vendor conversations skip: the organizations seeing the worst outcomes from AI hiring tools are not using bad technology. They are using good technology badly.

The popular narrative positions AI as either a silver bullet or an ethical disaster. Neither framing is useful. The actual story is more nuanced. AI gives you better inputs for human decisions. It does not replace the decisions. When technical hiring managers treat AI as a decision-maker rather than a decision-support system, they stop asking whether the output makes sense. They stop catching the edge cases. They stop noticing when the model is confidently wrong.

Over-reliance on predictive AI is a real risk. A model trained on historical hiring data learns what your past hires looked like, not what your best future hires could look like. If your historical hires came predominantly from a narrow set of schools or backgrounds, an unchecked predictive model will encode that pattern as a feature rather than a bug. The real-time AI impact on hiring quality is maximized when AI surfaces candidates human reviewers would have missed, not when it confirms the patterns that already existed.

The processes that consistently outperform are the ones that blend AI-generated signals with transparent, explainable frameworks and deliberate human review. The AI screens for demonstrated skills and consistent communication. The human reviews for judgment, collaboration signals, and cultural contribution. Neither replaces the other. Both are accountable.

Lead with intent. Be explicit about what you want AI to do and what you reserve for human judgment. Measure both sides. Share results with your team. The technical hiring managers who will build the best teams over the next five years are not the ones who adopt AI fastest. They are the ones who use it most thoughtfully.

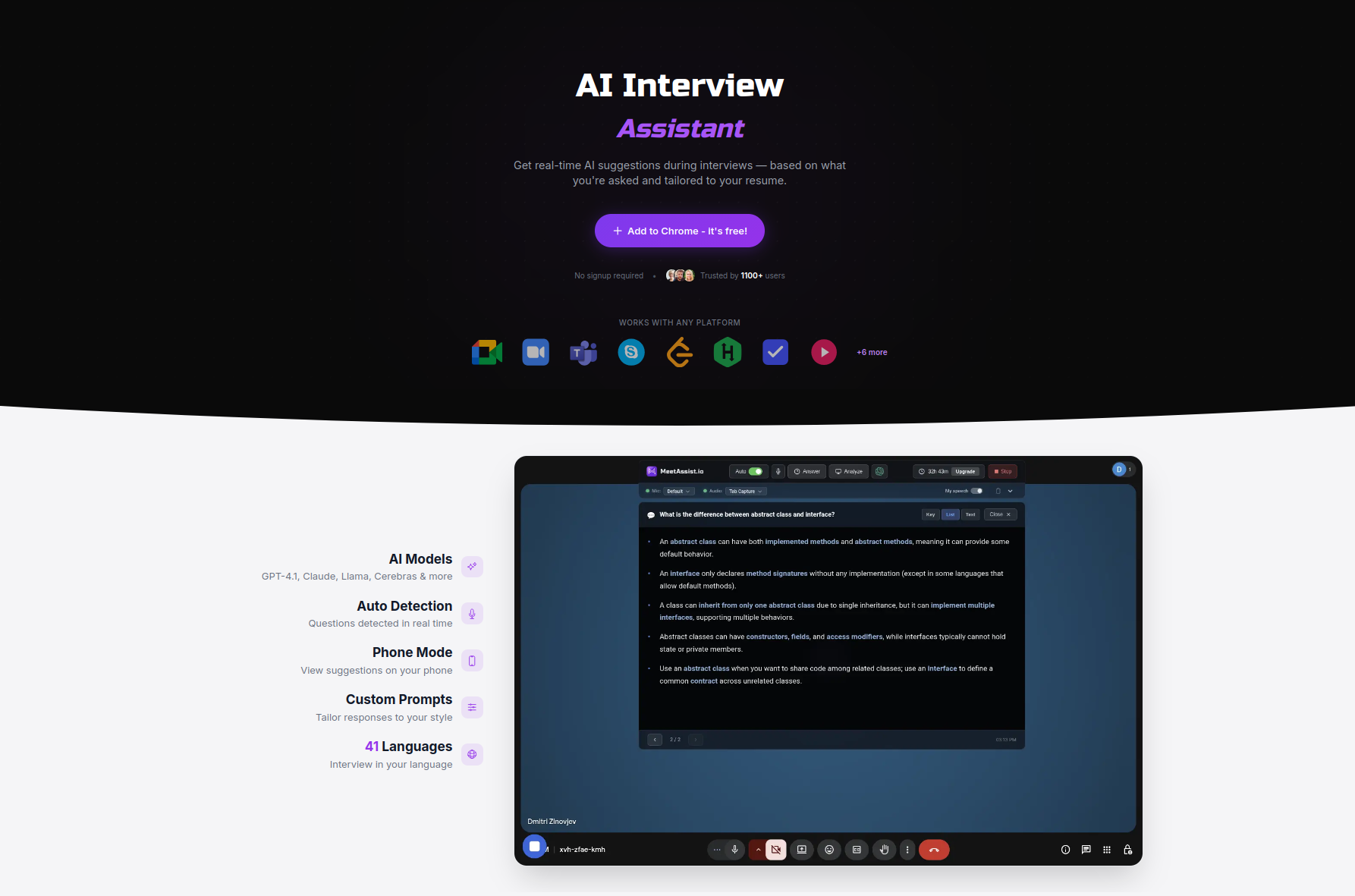

Take your technical hiring to the next level with MeetAssist

Having explored both the transformative impacts and practicalities of AI-driven hiring, the next step is putting these strategies into practice with tools built specifically for the challenge.

MeetAssist is purpose-built for the moments where AI assistance matters most: live technical interviews, coding challenges, and real-time assessment scenarios. Whether you are evaluating candidates or helping your team prepare for high-stakes evaluations, MeetAssist provides real-time AI suggestions, STAR-method formatting, and seamless integration across Google Meet, Teams, and Zoom. You can compare top AI interview tools to see how MeetAssist stacks up against other platforms, or read the guide on how to use MeetAssist to see how quickly your team can operationalize it. It is a one-time purchase with no subscription and full privacy by design.

Frequently asked questions

Does AI actually improve the quality of technical hires?

Yes. In a randomized trial with five-month employment tracking, candidates selected through AI-powered structured interviews found new jobs at a 40% rate, versus 23% for those selected through traditional resume screening.

How does AI impact bias in technical hiring?

AI scoring tools have been shown to rate underrepresented groups higher than human interviewers do, but asynchronous AI-led interview formats reduce application continuation rates by more than 50%, particularly among women, creating a different kind of access problem.

Are AI-driven resume screeners more cost-effective than humans?

Significantly so. AI screens 3.28 candidates per hour at $2.29 each, compared to a human recruiter’s 1.07 per hour at $50, with comparable accuracy to expert reviewers.

What are the main risks of adopting AI for hiring?

The largest risks are perpetuating historical bias through predictive models trained on non-diverse past hires, and designing candidate experiences that drive qualified applicants to disengage before human review.

What should I look for in an AI hiring solution?

Prioritize tools that offer explainable scoring rather than black-box outputs, built-in bias audit capabilities, and human override mechanisms at every automated decision point.

