You’re preparing for a remote technical interview and suddenly wonder: what happens to my video, voice, and behavioral data once AI starts analyzing me? Many job seekers are unsure how AI is used in interviews and where their data ends up. Understanding AI interview privacy helps you participate confidently and safeguard your personal information. This guide explains what AI interview privacy means, outlines the main risks and protections, and offers practical tips for your next interview.

Table of Contents

- Key takeaways

- Understanding AI interview privacy: what it means for you

- Common privacy risks and challenges in AI-assisted interviews

- How to protect your privacy during AI interviews: practical strategies

- Comparing AI interview platforms: privacy features to look for

- Explore MeetAssist’s privacy-conscious AI interview tools

- FAQ

Key takeaways

| Point | Details |
|---|---|
| Data captured during interviews | AI interviews collect audio, video, text, and behavioral data that may be analyzed to assess skills and suitability. |
| Indefinite data storage | Data can be stored indefinitely on company or vendor servers and may be shared beyond the hiring decision. |
| Consent and policy clarity | Clear consent terms and policies reveal how data may be used or shared beyond the interview. |
| Privacy-oriented platforms | Choose platforms that provide strong privacy controls and clear data retention practices to reduce risk. |

Understanding AI interview privacy: what it means for you

AI interview privacy refers to the protection of your personal and behavioral data during technology-assisted hiring processes. When you participate in remote interview assistance platforms, sophisticated algorithms collect and analyze multiple data streams in real time. These systems don’t just record what you say. They capture how you say it, your facial expressions, voice patterns, word choice, and even subtle behavioral signals like eye movement or response timing.

AI interview tools collect audio, video, text, and behavioral data during remote interviews, raising concerns about data usage and protection. This collection happens continuously throughout your session, creating a comprehensive digital profile that goes far beyond a traditional resume or reference check. The algorithms process this information to evaluate your communication skills, emotional intelligence, technical competence, and cultural fit.

Why does safeguarding this data matter so much? Your interview recordings contain deeply personal information that could be misused, shared without consent, or analyzed in ways that introduce bias. In an in-person interview, only human memory and notes capture your performance. AI systems, by contrast, can create persistent, searchable records. These digital footprints can follow you across applications if companies share candidate databases or use third-party assessment tools.

Remote interviews amplify privacy concerns compared to in-person meetings. Key differences include:

- Digital recordings stored indefinitely on company or vendor servers

- Automated analysis that may lack human oversight or appeal processes

- Potential access by multiple parties including hiring managers, HR teams, and external consultants

- Cross-border data transfers that complicate regulatory protections

- Integration with other databases that could create comprehensive candidate profiles

The shift to remote hiring has accelerated dramatically, but privacy frameworks haven’t kept pace. You need to understand what happens to your data because companies rarely volunteer complete information about their AI systems, retention policies, or secondary uses of candidate information.

Common privacy risks and challenges in AI-assisted interviews

Navigating AI interview privacy means recognizing specific threats that could compromise your personal information or career prospects. Concerns about how AI interview platforms store and use candidate information include the risk of bias and a lack of transparency. These aren’t theoretical problems. They affect real candidates every day.

Data breaches represent the most obvious risk. When interview platforms store thousands of candidate videos and transcripts, they become attractive targets for cybercriminals. A single breach could expose your voice, face, responses to technical questions, and personal information to unauthorized parties. You can replace a leaked email address, but you can’t change your face or voice, and you can’t recall video recordings once they spread.

Unclear data handling policies create another major challenge. Many AI interview platforms bury critical information in dense legal documents or provide vague assurances about data security. You might consent to recording without understanding that your interview could train future AI models, be shared with parent companies, or remain accessible long after the hiring decision.

Continuous monitoring extends beyond the formal interview window. Some systems analyze your behavior during technical assessments, track how you navigate coding challenges, or monitor your screen activity. This surveillance happens without clear boundaries, and you may not know what triggers algorithmic flags or how systems interpret your actions.

AI bias affects fairness and privacy simultaneously. Algorithms trained on historical hiring data can perpetuate discrimination based on accent, appearance, communication style, or other protected characteristics. When biased systems make hiring recommendations, your privacy suffers because irrelevant personal attributes influence decisions that should focus solely on qualifications.

Watch for these specific privacy risks:

- Lack of explicit consent for each data collection type and purpose

- Indefinite data retention without clear deletion timelines or processes

- Third-party access to your interview data for analytics or model training

- Absence of mechanisms to review, correct, or challenge AI-generated assessments

- Vague language about data sharing with affiliates, partners, or future acquirers

- No transparency about which AI models analyze your data or their accuracy rates
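One way to keep track of these risks is to turn the list into questions and note which ones a platform’s policy actually answers. The Python sketch below is illustrative only: the key names and question wording are assumptions made for this example, not part of any platform’s API.

```python
# The six risks from the list above, rephrased as questions for a recruiter or vendor.
# Keys and wording are hypothetical labels chosen for this sketch.
RISK_QUESTIONS = {
    "explicit_consent": "Is consent requested separately for each data type and purpose?",
    "retention_timeline": "Is there a stated deletion timeline and process?",
    "third_party_access": "Is third-party access for analytics or model training ruled out or opt-in?",
    "review_mechanism": "Can I review, correct, or challenge AI-generated assessments?",
    "sharing_language": "Is sharing with affiliates, partners, or acquirers spelled out precisely?",
    "model_transparency": "Are the AI models and their accuracy rates disclosed?",
}


def open_questions(answers: dict[str, bool]) -> list[str]:
    """Return every question that is unanswered or answered 'no'."""
    return [
        question
        for key, question in RISK_QUESTIONS.items()
        if not answers.get(key, False)
    ]
```

For example, calling `open_questions({"explicit_consent": True, "retention_timeline": True})` returns the four remaining questions, which you could paste into an email to the recruiter.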

Understanding AI privacy risks in technical hiring isn’t paranoia. It’s professional due diligence that protects your personal information and ensures fair evaluation based on your actual skills and qualifications.

The consent challenge deserves special attention. Many platforms present consent as binary: agree to everything or forfeit the interview opportunity. This pressure makes it nearly impossible to negotiate terms or opt out of specific data uses while still participating in the hiring process. You’re forced to choose between privacy and career advancement.

How to protect your privacy during AI interviews: practical strategies

You can take concrete steps to guard your privacy even when AI systems analyze your interview performance. Start by reading privacy policies thoroughly before consenting to any data collection. Look beyond marketing language to find specific details about data retention periods, third-party sharing, and your rights to access or delete information.

Limit data sharing by controlling what AI systems can access:

- Disable unnecessary camera and microphone permissions outside active interview windows

- Ask explicitly about data retention schedules and request the shortest possible timeframe

- Use dedicated devices for interviews rather than personal computers with sensitive information

- Connect through secure, private networks instead of public WiFi that could be monitored

- Close unrelated applications and browser tabs that might be captured or analyzed

Question interviewers directly about AI implementation. Most candidates never ask how their data will be used, which allows companies to maintain opacity. You have every right to understand the technology evaluating you. Request information about which AI tools the company uses, what data those tools collect, how long recordings are stored, and who can access your interview materials.

Technology-based safeguards add another protection layer. A virtual private network (VPN) encrypts traffic between your device and the VPN server and masks your IP address. Privacy screens prevent others from viewing your interview if you’re in a shared space. Video platforms that encrypt calls end to end offer better protection than tools that don’t. Using AI in interviews successfully means balancing technological assistance with data protection.

Pro Tip: Before any AI-assisted interview, email the recruiter or hiring manager asking for a copy of the AI tool’s privacy policy and a clear explanation of how your interview data will be used, stored, and eventually deleted. Most candidates skip this step, but it signals that you take privacy seriously and often prompts companies to provide better transparency or even improved data handling.

Document everything related to your interview privacy. Save copies of privacy policies, consent forms, and any communications about data handling. Take screenshots of privacy settings you configure. This documentation becomes crucial if you later need to request data deletion, file a complaint with regulators, or challenge how your information was used.
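If you save these files on your own machine, a small script can keep a dated record of exactly what you saved. The following is a minimal sketch, assuming the documents sit on local disk. The function name `record_evidence` and the manifest file name are hypothetical choices for this example. It stores a SHA-256 hash and a UTC timestamp for each file so you can later show that a saved copy has not changed.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_evidence(file_path: str, note: str,
                    manifest_path: str = "privacy_manifest.json") -> dict:
    """Append a hash and timestamp for one saved document to a JSON manifest."""
    source = Path(file_path)
    entry = {
        "file": source.name,
        # The hash changes if the file is edited after it was recorded.
        "sha256": hashlib.sha256(source.read_bytes()).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "note": note,
    }
    manifest = Path(manifest_path)
    # Load earlier entries if the manifest already exists, otherwise start fresh.
    entries = json.loads(manifest.read_text()) if manifest.exists() else []
    entries.append(entry)
    manifest.write_text(json.dumps(entries, indent=2))
    return entry
```

A call such as `record_evidence("privacy_policy.pdf", "Policy sent by recruiter")` (file name illustrative) adds one entry per saved document. Keep the manifest alongside the files it describes.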

Consider the timing of your privacy inquiries. Asking detailed questions about AI privacy after receiving a job offer carries less risk than during initial screening. However, waiting too long means your data has already been collected and analyzed. Strike a balance by asking general questions early and more specific ones as you progress through the hiring process.

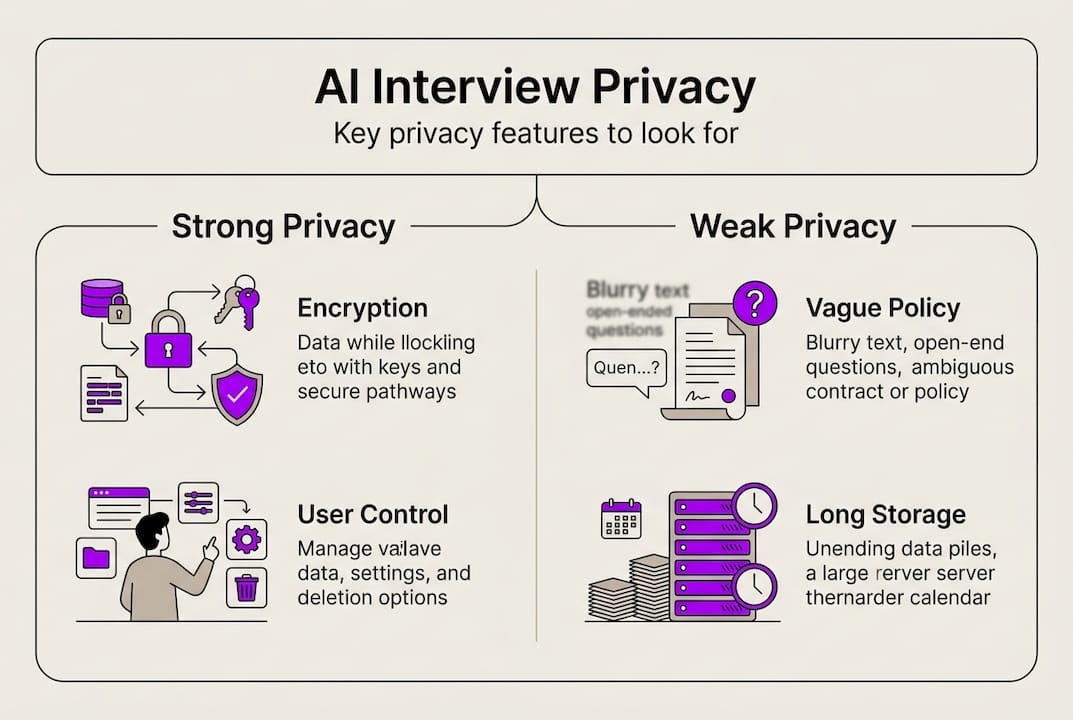

Comparing AI interview platforms: privacy features to look for

Not all AI interview tools treat candidate data equally. When companies give you a choice of platforms, or when you’re selecting tools for your own interview preparation, evaluate privacy features systematically. Platforms vary widely in their privacy measures, and knowing the key features helps you select safer tools.

Essential privacy features include encryption that protects your information both in transit and at rest, ideally end to end. Granular consent mechanisms let you approve specific data uses rather than accepting blanket terms. Minimal data retention policies automatically delete your information after a set period instead of storing it indefinitely. Auditability features let you review what data was collected and how it was used.

| Platform feature | Strong privacy | Weak privacy | Why it matters |
|---|---|---|---|
| Data encryption | End-to-end, at rest and in transit | Basic or unclear encryption | Prevents unauthorized access during breaches |
| Consent model | Granular, purpose-specific | All-or-nothing blanket consent | Gives you control over each data use |
| Retention period | 30-90 days with auto-deletion | Indefinite or unclear retention | Limits exposure window for your data |
| Third-party sharing | No sharing without explicit consent | Broad sharing with partners | Prevents your data from spreading |
| User data access | Download and review your data anytime | No access to your own information | Lets you verify what was collected |
| Deletion rights | On-demand deletion with confirmation | Difficult or impossible to delete | Ensures you can remove your data |
| Regulatory compliance | Documented GDPR and CCPA compliance with public audits | Claims compliance without proof | Provides legal recourse if violated |

Regulatory compliance signals that platforms meet minimum privacy standards. The General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) establish baseline requirements for data handling, consent, and individual rights. Platforms that publicly document their compliance and undergo regular audits demonstrate a stronger commitment to privacy than those making vague claims.

Transparency reports reveal how platforms handle data requests, breaches, and user complaints. Companies that publish regular transparency reports show accountability. Look for information about how many data requests they received, how they responded, and any security incidents that occurred.

User control options determine how much power you have over your own data. Strong platforms let you access your complete data file, correct inaccuracies, restrict certain processing activities, and delete everything permanently. Weak platforms make these actions difficult or impossible, leaving you dependent on company goodwill.

Pro Tip: Prioritize platforms that provide clear, accessible privacy documentation written in plain language rather than legal jargon. If you need a lawyer to understand a privacy policy, the platform probably isn’t prioritizing transparency or user empowerment.

Balance functionality with privacy safeguards when choosing AI interview tools. The most feature-rich platform isn’t worth using if it treats your data carelessly. Conversely, a privacy-focused tool that lacks essential interview features won’t serve your needs. Identify your non-negotiable privacy requirements first, then evaluate functionality among platforms that meet those standards.
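The two-step approach described here (filter on non-negotiable privacy requirements, then rank what remains by functionality) can be sketched in a few lines of Python. Everything in the sketch is an assumption for illustration: the `Platform` fields, the feature labels, and the idea of a single self-assigned functionality score.

```python
from dataclasses import dataclass


@dataclass
class Platform:
    name: str
    privacy_features: set[str]   # hypothetical labels, e.g. "auto_deletion"
    functionality_score: int     # your own 0-10 rating of interview features


def shortlist(platforms: list[Platform], must_have: set[str]) -> list[Platform]:
    """Keep platforms that meet every non-negotiable, best functionality first."""
    qualifying = [p for p in platforms if must_have <= p.privacy_features]
    return sorted(qualifying, key=lambda p: p.functionality_score, reverse=True)


# Made-up example data, not real products.
candidates = [
    Platform("Tool A", {"auto_deletion", "granular_consent", "on_demand_deletion"}, 6),
    Platform("Tool B", {"auto_deletion"}, 9),
    Platform("Tool C", {"auto_deletion", "granular_consent"}, 8),
]
requirements = {"auto_deletion", "granular_consent"}
ranked = shortlist(candidates, requirements)
```

In this made-up example, Tool B has the highest functionality score but is dropped because it lacks granular consent, so `ranked` contains Tool C followed by Tool A.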

Compare platforms using independent reviews and user experiences, not just marketing claims. Companies often overstate their privacy protections while downplaying risks. Third-party security assessments, user testimonials about data handling, and regulatory actions provide more reliable information than vendor promises.

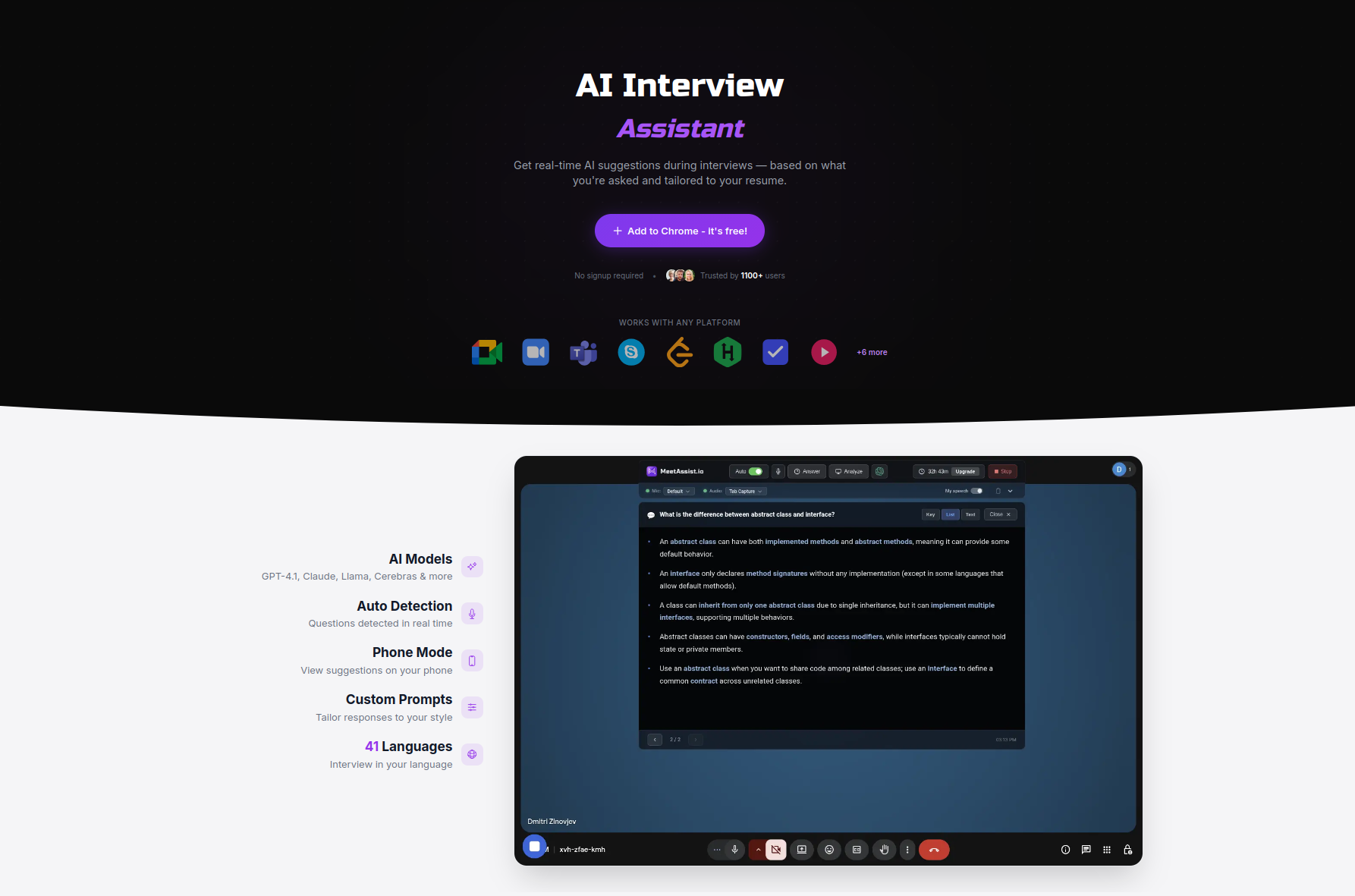

Explore MeetAssist’s privacy-conscious AI interview tools

Protecting your privacy during AI-assisted interviews doesn’t mean sacrificing the technological edge that helps you succeed. MeetAssist combines powerful real-time AI assistance with strong privacy safeguards designed specifically for technical candidates. The platform encrypts all data streams, records no audio or video, and offers Phone Mode that makes the extension completely invisible on your screen during interviews.

When you’re ready to compare AI interview assistants based on privacy features and functionality, explore MeetAssist alternatives to see how different tools stack up. The platform supports multiple AI models, customizable answer styles, and resume-based personalization while maintaining your data security. Learn exactly how to use MeetAssist to maximize your interview performance without compromising your privacy rights or personal information.

FAQ

Is it safe to share personal data during AI interviews?

Safety depends entirely on the platform’s privacy measures and your vigilance in reviewing policies and controls. Share data only when you trust the system’s encryption, retention policies, and access controls. Always verify that platforms comply with relevant privacy regulations and provide clear information about data handling before consenting.

Can I refuse AI analysis during my interview?

Some companies allow opting out of AI analysis or requesting manual interviews, but policies vary widely. You must clarify this option before your interview begins. Refusing AI analysis might limit your opportunities with companies that mandate automated assessments, so weigh this decision carefully based on your privacy priorities and career goals.

What questions should I ask about AI interview privacy?

Ask how your data is stored, who can access it, how long it’s retained, and whether you can delete it afterward. Also inquire about which AI models analyze your information, whether your data trains future algorithms, if third parties receive your information, and what happens to your data if you’re not hired. Request copies of privacy policies and data processing agreements.

How do privacy regulations affect AI interview tools?

Privacy laws like GDPR and CCPA impose standards on AI tools to safeguard candidate data, including requirements for consent, data minimization, and individual rights. However, enforcement and coverage vary by jurisdiction. Candidates should understand which regulations apply based on their location and the company’s location, then confirm that platforms demonstrate compliance through certifications, audits, or transparent data protection practices. Where they apply, these regulations give you legal recourse if your privacy rights are violated.